Resolve “Job aborted due to stage failure” in Spark

When it comes to troubleshooting Spark issues, one thing you get used to is knowing what the error exactly…

Blog posts related to Apache Spark

In this article, we will understand and learn about the CoarseGrainedScheduler and why we are encountering this error in the…

In this article, we will learn about the “TypeError: an integer is required (got type bytes)” that occurs in PySpark…

Spark provides a lot of APIs to save a DataFrame in multiple formats like CSV, Parquet, Hive tables, etc. In this…

I hope you have encountered a similar situation, where you wanted to do some manipulation on a Spark DataFrame and…

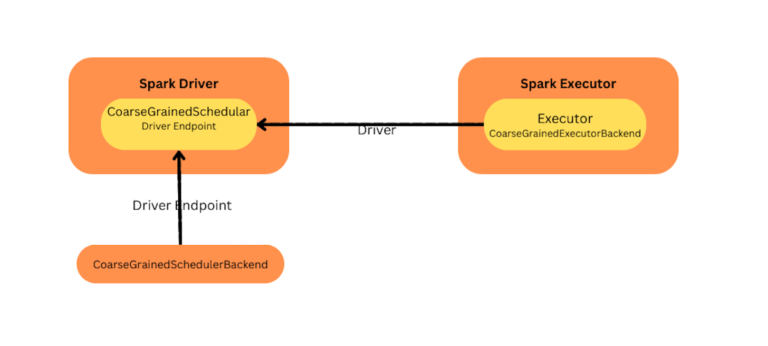

The driver is the process that starts the Spark context or session, which helps in communicating with resource managers and runs tasks in…

Broadcast variables are commonly used by Spark developers to optimize their code for better performance. This article will provide a…

“Container killed by YARN for exceeding memory limits” usually happens when the JVM usage goes beyond the YARN container memory…

Reading/writing UTF-8 enabled files: Sometimes, we encounter issues in which Spark returns non-ASCII characters in the wrong format…

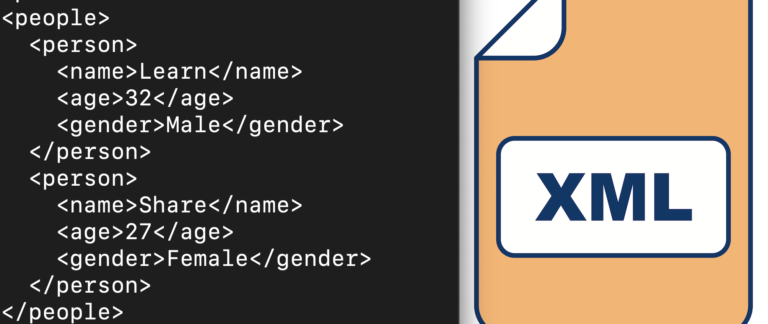

Apache Spark is a powerful data processing framework. Commonly, Spark is used to process data stored in various formats, including…

groupByKey and reduceByKey are two different operations that help to transform RDDs (Resilient Distributed Datasets). What is the difference…

Hello! If you’re into big data processing, you’ve probably heard of Spark, right? It’s a popular distributed computing framework used…

In many cases, we need to increase the driver/executor memory or cores to improve performance or to avoid out-of-memory issues

One of the easiest ways to kill a Spark application is by issuing the “yarn application -kill” command

There are multiple use cases where we need to access Kudu from Spark to store and retrieve data. In this…

Usually, a YARN application gets stuck in the ACCEPTED state when it cannot find enough resources to create a new container in the cluster…

Apache Spark is a popular distributed computing framework for big data processing and Ozone is a distributed object store that…

Have you been wondering what the difference is between Apache Spark and PySpark, and which one to use for big…

We often need to enable the debug log level in Spark to understand and troubleshoot an issue. In this article,…

jstack is a command-line tool that helps to capture the thread dump of a Java process. Using the thread…

What is data skew? Let’s take a basic example of “CONSTRUCTION WORKERS”. In the above example: Skew happened due to…

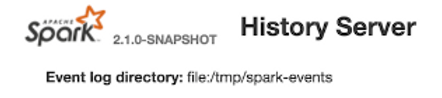

A step-by-step guide to setting up the Spark history server locally (Mac or Windows). This helps to debug…

Short History of Spark: — Spark was created in Berkeley back in 2009 — An evolution of the MapReduce concepts…

Spark is a data processing framework that helps to process data faster. It uses in-memory computation and multiple nodes to run…

“Task serialization failed: java.lang.StackOverflowError” usually happens when the JVM encounters a situation where it is unable to create a…

Kerberos debugging involves enabling the debug log level for the Krb5LoginModule at the JVM level. This would help us to…

We usually see the error “org.apache.hadoop.hive.serde2.SerDeException: Unexpected tag” in Spark when trying to connect the Hive…

“failure: Total size of serialized results of x tasks (1024.5 MB) is bigger than spark.driver.maxResultSize (1024.0 MB)” in Spark

In this article, we will learn about memory overhead configuration in Spark and explore more about spark.driver.memoryOverhead & spark.executor.memoryOverhead and…

“Futures timed out” is a common error that can occur when running Spark applications. In this article, we will learn,…

As we all know, Spark is an open-source, distributed processing framework used in big data. It helps perform analytics on…

Apache Spark is a powerful distributed framework that leverages in-memory caching and optimized query execution to produce faster results. The…

Spark is a powerful framework for processing large datasets in a distributed manner. In this article, we will discuss how…

Spark is a distributed framework that uses in-memory computation to process a large volume of data much faster. One…

OutOfMemoryError is no surprise in Spark, as it is a memory-centric framework. To deal with memory issues, we need…